Issue #63 // When Absence Is Information—Missing Data in Proteomics

Distinguishing technical biases from biological signals and handling MCAR vs MNAR appropriately.

Enjoy this piece? Whow your support by tapping the 🖤 in the header above. It’s a small gesture that goes a long way in helping me understand what you value and in growing this newsletter. Thanks!

Issue № 63 // When Absence Is Information—Missing Data in Proteomics

I first started coding seriously during COVID-19 lockdowns, shortly after co-founding a wearable technology company, NNOXX. At the time my primary interest was analyzing time-series physiological data, which quickly evolved into building ML-based models to predict fatigue status, MSK injury risk, and other factors using distributed sensor networks. Early into my PhD though, my interests shifted from applied machine learning—building and deploying models for tasks like prediction, decision making, and pattern recognition—to data-driven research, specifically focusing on computational cancer biology. With this transition came unanticipated second-order effects.

When I was focusing on ML, I spent a lot of time thinking about how to prepare and preprocess data in such a way as to best exposure the underlying structure of a given prediction problem to an algorithm to achieve the best performance. Then, when my attention shift to research, I found myself thinking more about the nuances of how to best clean data to preserve biological signal without introducing noise or spurious association in down stream analysis. In theory, these two things aren’t so different—after all, data cleaning is data cleaning—but, as I’ve recently come full circle something clicked for me. Perhaps this is obvious to some of you reading, but there are different constraints and tradeoffs when cleaning or preparing a given dataset for applied ML and data-driven research. In this piece I’m going to discuss some of those differences as it applies to working with proteomic data, specifically in how we handle missing data. But, before getting into the weeds I want to back up and clarify what I mean when I say data cleaning.

At the simplest level, data cleaning is the process of identifying and correcting systematic errors in your data. For example, data cleaning could include correcting mistyped data, removing corrupted, missing, or duplicate data points, and sometimes adding missing data values back in. Data cleaning may also include tasks such as encoding categorical variables into binary variables (i.e., turning no/yes into 0/1). For what may seem like a rote task, data cleaning actually requires a fairly high degree of domain expertise. For example, let’s say you’re working with a dataset where vO2 (volume of oxygen consumption) is one of your features and you notice a row where the value is 92 ml/kg/min. While not impossible, as a subject matter expert you may realize how improbably this value is, whereas someone without that expertise may overlook it.

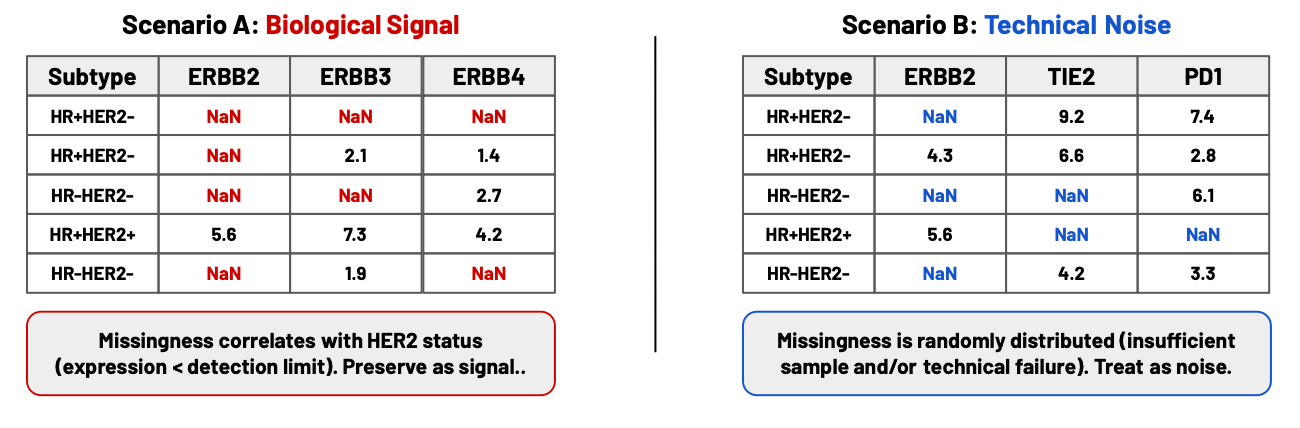

Similarly, when looking at missing values in proteomic data, you may spot a pattern to the missingness, which can give clues as to whether these values should be dropped, whether you can impute values in, or whether you should leave them be. For example, you may notice that in a dataset of 500 breast cancer patients there are NaN values for the protein ERBB2 across ~15% of the samples. Upon closer inspection, you may see that those samples correspond to patients classified as HR+HER2- and HR-HER2-, suggesting that missingess is due to protein expression being below the detection threshold. Alternately, suppose you saw these same ERBB2 values missing, but with no clear separation by patient classification. In this case, maybe those NaN samples happened to correspond to patient samples (stored in rows) where many of the corresponding protein columns are blank. This might suggest that there was not enough tumor sample to process, and as a result this type of "missingeness" is technical, not biological.

Notice that identifying the cause of missing data isn’t just about looking at the data and finding patterns. You also have sufficiently understand the experimental context, the underlying biology, and potential sources of technical failure, whether during biological sample acquisition or sequencing. It’s for these reasons that I don’t advise fully automating data cleaning or outsourcing it to coding agents. While they may better understanding the statistical nature of your dataset, they often lack the context dependence to (a) understand the cause of missingness and (b) how to address it in a specific problem scenario. Importantly, these are two different skills; understanding the biological of your experimental condition and the sequencing technology generating your data allows you to solve the first problem. But, in order to figure out how to proceed after figuring out why your data is missing you need to understand the assumptions of the analyses you plan to perform.

Unlike missing data in many other contexts, missingness in proteomics is seldom random, and when it is it’s often hard to identify. Additionally, the appropriate strategy for handling different types of missingness differs between machine learning applications (where the goal is prediction) and data-driven research (where the goal is biological interpretation). This creates a tension between best practices for avoiding data leakage during ML applications (which typically means handling missing data after splitting the dataset) and the practical realities of working with proteomics data. In proteomics experiments, missing values typically result from one of two sources:

Missing completely at random (MCAR): Some samples may have a high proportion of missing protein measurements (e.g., >50% of proteins unmeasured) due to insufficient tumor material, poor sample quality, or technical failures during sample processing. These samples represent failed experiments rather than a biological signal and should be removed from the dataset entirely before model development if the goal is prediction (this removal is a quality control step and does not constitute data leakage).

Missing not at random (MNAR): Individual proteins may be missing in specific samples because their expression levels fall below the instrument’s detection limit. This is a common source of missingness in proteomics data and is informationally rich—the absence of a measurement often indicates very low or absent protein expression, which may be biologically and clinically relevant. For example, the absence of a measurable signal for an oncogene in a tumor sample might indicate good prognosis.

For machine learning and predictive modeling applications a standard approach is to dealing with for MNAR-based missingness is to impute missing values with a small fraction of the protein-specific minimum detected value (typically 10% of the minimum for linear-scale data, or minimum minus 1-2 units for log-transformed data). This approach is biologically justified because it represents a plausible lower bound for expression (the protein is present but below detection) and preserves the biological information that "this protein is very low in this sample". Additionally, this approach is protein-specific, respecting that different proteins have different detection ranges and avoids the assumption that missing = zero, which would often be an incorrect interpretation. For log-transformed proteomics data (such as log2-scaled measurements), imputing with the protein-specific minimum minus 1.0 in log space corresponds to expression approximately half as abundant as the minimum detected level in linear space, which represents a biologically reasonable lower bound.

Did you find this piece helpful? Subscribe for free to have the next post delivered to your inbox:

The challenge for machine learning workflows is that this imputation ideally should occur before train-test splitting, which appears to violate the principle of preventing information leakage from test to training data. However, this pre-split imputation is widely implemented in the proteomics biomarker literature for several reasons. For starters, the imputation is based on biological knowledge (below detection = very low) rather than statistical properties of the full dataset. Additionally, the calculation uses only protein-specific minima, not cross-sample statistics that would truly constitute leakage. Most importantly though, attempting to impute separately within train/validation/test splits can lead to different imputation schemes for the same protein across splits, which is biologically inconsistent.

Of course, there is an alternative to imputation. We could also just remove all proteins with any missing values. The problem with this approach is that in most cases it would eliminate nearly the entire dataset as some degree of below-detection missingness is nearly universal in proteomics. As a result, for machine learning applications, I often use the following three stage approach:

Stage 1: Remove samples with >20% missing proteins (quality control for MCAR) before splitting. This stage removes samples with insufficient tumor material or severe technical failures, excluding poor-quality samples that don’t represent successful experiments.

Stage 2: Remove proteins with >20% missing values before splitting. After removing poor-quality samples, this stage identifies and removes proteins that are frequently unmeasured across the remaining high-quality samples. Proteins missing in more than 20% of samples likely reflect unreliable assays or technical measurement issues (MCAR) rather than biological signals. This ensures that the feature set consists only of proteins that can be reliably measured (after removing bad samples, high missingness in a protein indicates a fundamental measurement problem rather than sample-specific issues).

Stage 3: Impute remaining sparse missing values (representing MNAR below-detection measurements) before splitting. At this point, remaining missing values are sparse (typically <10% per protein) and likely represent true biological phenomena where specific samples have expression below the detection limit. For log-transformed data, impute with the protein-specific minimum log value minus 1.0 (corresponding to half the minimum expression in linear space). For linear-scale data, use 10% of the protein-specific minimum. While this technically uses information from the full dataset, it is justified by the biological nature of the imputation and the practical impossibility of the alternative (we can’t train models with missing values).

For hypothesis-driven research and differential expression analysis (DEA), the approach to handling missingness is fundamentally different. DEA methods are specifically designed to handle missing values appropriately, and many can accommodate proteins with missingness in a subset of samples. Importantly, because the goal is to explain biology rather than build a predictive model, imputation is often undesirable—introducing imputed values can obscure true biological patterns and create artificial signals. Instead, a Perseus-style filtering approach is typically preferred: remove proteins with excessive missingness (e.g., missing in >70% of samples within a condition or across all samples, for example) while retaining proteins with moderate missingness that still provide biological information. This preserves the interpretability of the results while ensuring adequate data quality.

For normal data-driven analyses with continuous expression data, I handle zeros (representing samples with expression too low to detect) differently depending on context. When I have untransformed data and I’m confident that zeros represent true below-detection expression rather than technical artifacts, I typically impute these with 10% of the protein-specific minimum value. However, if the source of missingness is ambiguous—I cannot distinguish whether it results from biological low expression or technical failure—I do not impute. Similarly, if the data has already been transformed before I receive it, I avoid imputation since I lack the information needed to apply biologically appropriate imputation strategies.

Regardless of how you handle this approach you have to be clear in documenting your rationale, explaining all three types of missingness (sample-level MCAR, protein-level MCAR, and MNAR) and justifying your choice of imputation versus filtering based on your analytical goals. For machine learning applications, You should also justify the pre-split imputation based on the MNAR nature of below-detection measurements and acknowledge that this represents a compromise between statistical ideals and biological realities . When possible, it’s also helpful perform sensitivity analyses showing that your results are robust to alternative imputation strategies. For other omics data types (transcriptomics, metabolomics), similar principles apply, though the specific imputation strategies may differ based on the measurement technology and the meaning of missingness in that context. The key is always to understand the biological and technical sources of missingness in your specific dataset and to choose imputation strategies that respect the underlying biology while minimizing the risk of introducing spurious patterns that could compromise model generalization.

This is really neat stuff! Have you tried using generative models for filling in those missing data points? Something as simple as PCA can use the covariance structure of the actual data to identify what those missing values might be. I think this would be more ideal for data with a stronger autocorrelation structure than what you are describing here, since the usual successful applications I've seen of the PCA method are with time-series data. Just a thought!